‘Ultimately, you feel empty’: India’s women workers exposed to hours of harmful content to train AI

On the veranda of her family’s house, Monsumi Murmu sits with her laptop precariously placed on a mud slab embedded in the wall, working from one of the few spots in her village where the mobile signal is strong enough to support her tasks. Inside the home, the sounds of daily life resonate: the rhythmic clattering of utensils, the patter of footsteps, and the murmur of voices create a familiar backdrop to her activities.

However, the screen before her displays a far more unsettling reality: a video of a woman being subdued by a group of men, with a shaky camera, chaotic shouting, and heavy breathing. Overwhelmed by the horrifying imagery, Murmu increases the playback speed, but she knows her role requires her to watch the footage to the end.

At just 26 years old, Murmu holds the position of a content moderator for a multinational technology firm, logging onto her account from Jharkhand, India. Her responsibilities involve assessing images, videos, and text flagged by automated systems for potential violations of the platform’s policies.

Each day, she typically reviews around 800 videos and images, making evaluations that help train algorithms to recognize various forms of violence, abuse, and harmful content.

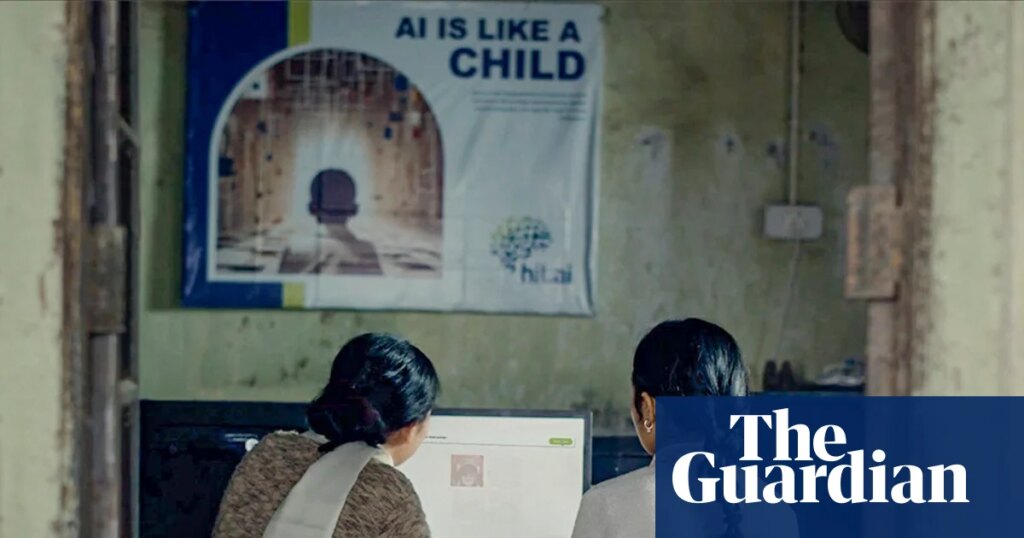

This kind of work is central to recent advances in machine learning, which rest on the premise that an AI system is only as good as the data it is trained on. In India, the labor force performing this essential task is increasingly composed of women, often referred to as “ghost workers”.

Reflecting on her initial experiences, Murmu recalls, “The first few months, I couldn’t sleep. I would close my eyes and still visualize the screen loading.” Disturbing images haunted her dreams—scenes of tragic accidents and incidents of sexual violence that she felt powerless to prevent. On particularly difficult nights, her mother would wake up to comfort her.

As time has passed, she indicates that the shock of the images has faded; “By the end, you don’t feel disturbed – you feel blank.” Yet, there remain some nights when the disturbing dreams resurface, revealing the profound impact of her job: “That’s when you know the job has done something to you.”

Experts suggest that this emotional detachment, often followed by delayed psychological repercussions, is characteristic of content moderation work. “There may be moderators who escape psychological harm, but I’ve yet to see evidence of that,” states Milagros Miceli, a sociologist leading the Data Workers’ Inquiry, a project examining the roles of workers in AI.

Miceli continues, “In terms of risk, content moderation belongs in the category of dangerous work, comparable to any lethal industry.”

Research indicates that content moderation generates lasting psychological stress, often manifesting as behavioral shifts like increased vigilance. Workers frequently report experiencing intrusive thoughts, heightened anxiety, and disruptions in sleep patterns.

A study of content moderators released in December 2021, which included participants from India, pinpointed traumatic stress as the most significant psychological risk. The findings revealed that even when workplace support structures existed, substantial levels of secondary trauma persisted among workers.

By 2021, an estimated 70,000 individuals in India were engaged in data annotation, a sector valued at approximately $250 million (£180 million), primarily driven by the nation’s IT sector, according to the industry body Nasscom. Findings indicated that around 60% of the revenue generated originated from the United States, while only 10% stemmed from domestic sources.

Roughly 80% of those involved in data annotation and content moderation come from rural or marginalized backgrounds. Companies frequently establish operations in smaller towns, where living costs and rent are significantly lower and a growing number of first-generation graduates are actively seeking employment.

Improved internet connectivity has allowed these locales to be integrated into global AI supply chains without requiring workers to relocate to urban centers.

Women represent a substantial portion of this workforce. Employers often view women as dependable, detail-oriented, and more inclined to accept contract or home-based roles perceived as “respectable” and “safe.” These jobs create a rare opportunity for income without requiring migration.

Many individuals in these labor hubs hail from Dalit and Adivasi (tribal) communities. For them, digital work signifies a notable advancement, offering cleaner, more stable, and better-paying alternatives compared to traditional agricultural labor or mining jobs.

Working remotely or in nearby locations sometimes further entrenches women’s marginality, according to Priyam Vadaliya, a researcher specializing in AI and data labor, who previously worked with the Aapti Institute in Bengaluru. She explains, “The respectability associated with this job, coupled with its rarity as a paid opportunity, often creates an atmosphere of gratitude expectations. This can deter workers from addressing the psychological damage it inflicts.”

Raina Singh, who was just 24 when she began working in data annotation, had plans to pursue a teaching career after graduating. However, she felt a pressing need for a stable monthly income before chasing that dream.

Returning to her hometown of Bareilly in Uttar Pradesh, she logged onto her tasks every morning from her bedroom, employed by a third-party firm contracted with global tech platforms. The pay—approximately £330 a month—was attractive, and even if the job description remained vague, the work seemed manageable.

Initially, Raina’s tasks involved reviewing text messages, flagging spam, and recognizing scam patterns. “It didn’t feel alarming,” she reflects. “More tedious than anything else. Yet, there was an exciting element to it. I felt like I was participating in the backend of AI; while my friends only knew it through ChatGPT, I was exposed to what really powers it.”

However, six months into the job, her assignments shifted abruptly to a project connected to an adult entertainment platform, where the task was to identify and remove content related to child sexual abuse.

“I had never imagined that this would become part of the role,” she expresses. The graphic and relentless nature of the material shocked her. When she raised her concerns with her manager, she was met with a curt message: “This is God’s work—you’re protecting children.”

Soon after, the nature of her assignments evolved again. Raina and several teammates were instructed to categorize a plethora of pornographic content. “I can’t even count how much porn I was subjected to,” she admitted. “It was non-stop, hour after hour.”

The negative effects of this work seeped into her personal life. The concept of intimacy became repugnant to her: “The thought of sex started to genuinely distress me,” she shares. This overwhelming exposure led her to withdraw from physical closeness with her partner, resulting in feelings of alienation.

When she expressed her discomfort to her supervisors, the response was dismissive: “Your contract outlines data annotation—this is data annotation.” Eventually, she resigned, but a year later, even the thought of intimacy triggers emotional distress or disassociation for her. “Sometimes, when I’m with my partner, I feel alien in my own skin. I crave closeness, yet my mind pushes away,” she explains.

Vadaliya highlights that job descriptions rarely clarify the nature of the work involved. “People are recruited under vague titles; only post contract signing and training do they fully realize what is expected of them.”

The remote, part-time roles are commonly promoted online as “effortless cash” or “zero-investment” opportunities, widely circulated via YouTube videos, LinkedIn posts, and through influencer-driven content that brands the work as flexible and low-risk.

Of eight data-annotation and content-moderation companies in India that were consulted, only two said they provided psychological assistance to workers; the rest contended that the nature of the work did not warrant mental health support.

Vadaliya reiterated that when support is offered, the responsibility falls on the individual to seek it out, placing the onus of care onto the already burdened workers. “Many data workers, particularly those from remote or underprivileged backgrounds, may lack the vocabulary to articulate what they are experiencing,” she observed.

In addition, the absence of legal acknowledgment of psychological trauma in the framework of India’s labor laws leaves these workers without substantial protections.

The emotional burden is further compounded by a sense of isolation. Those working as content moderators and data annotators are bound by stringent non-disclosure agreements (NDAs) that prevent them from discussing their tasks, even with close family and friends. Breaching these NDAs can lead to termination or legal consequences.

Murmu fears that if her family were to learn about her job, she would face the same pressure as many girls in her village and be forced into an arranged marriage instead of being allowed to continue working.

Now, as she approaches the end of her contract, with just four months remaining during which she earns roughly £260, the fear of unemployment is stronger than her worries about her mental well-being. “The anxiety of securing another job overwhelms me more than the work itself,” she admits.

For now, she has developed coping mechanisms to soothe her distress. “I enjoy long walks in the forest, sit under the open sky, and focus on the tranquility surrounding me.”

Sometimes, she finds solace in collecting minerals from nearby areas or painting intricate, traditional patterns on her home’s walls. “I’m uncertain if these activities will resolve anything,” she confides, “but they certainly provide me with a fleeting sense of relief.”
