‘Coffee Serves as a Cover’: The Deaf-Operated Cafe Where Hearing Customers Use Sign Language to Order

Wesley Hartwell raised his fists enthusiastically to the barista and shook them playfully beside his ears. He then lowered his hands, extended his thumbs and little fingers, and moved his fingers rhythmically near his chest as if milking a cow. Finally, with a touch of flair, he placed the fingers of one hand against his chin and flexed his wrist to complete the order.

Hartwell, who is hearing, had just used British Sign Language (BSL) to order his morning latte with regular milk at the Dialogue Cafe, a unique deaf-run space at the University of East London. He thanked Victor Olaniyan, the barista, who is deaf.

“Honestly, I must confess that when this cafe opened near my workplace, I was hesitant to visit it because the entire concept made me quite anxious,” said Hartwell, a university lecturer. “However, I’ve become quite intrigued. Sign language is incredible. I’m considering enrolling in a course to deepen my understanding.”

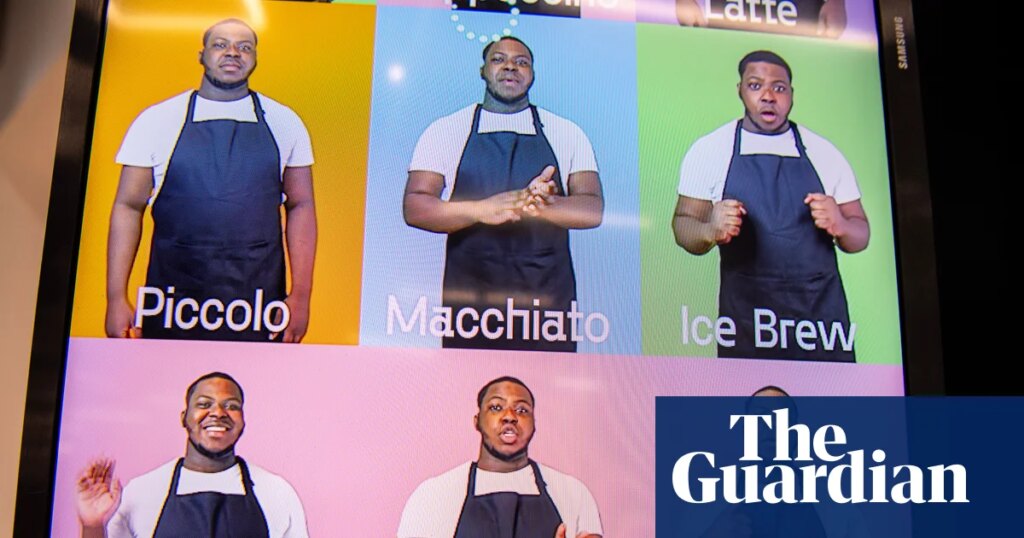

What encouraged Hartwell to try using BSL was the cafe’s innovative touchscreen menu. Unlike traditional menus that merely list beverages and pastries, these interactive menus feature videos demonstrating their BSL translations, making it easier for all customers to engage.

For many users of BSL, having direct access like this is essential, as BSL serves as the primary language for tens of thousands in the UK.

Olaniyan, who has been part of the cafe’s team for five years while pursuing a degree in accounting and management at the University of Reading, observed the reactions of hearing patrons to the video menu with mild amusement.

“I was raised in a hearing household, so I find it easy to navigate the hearing world,” he conveyed through sign. “Yet, many hearing individuals often seem uneasy when communicating with us. If this technology helps lessen that anxiety, then that’s fantastic, but I’m fine just the way I am.”

Recent years have seen a surge in digital and AI-driven tools that aim to reduce communication barriers between the deaf and hearing communities. Innovations range from signing avatars to advanced generative models intended to compete with major AI platforms.

Despite these advances, independent evaluations of the tools remain scarce. Sign language researchers caution that existing tools often fall short on linguistic subtleties, regional differences, and contextual variations, especially in high-stakes situations such as healthcare or legal matters.

Nevertheless, the aspirations are impressive: Silence Speaks, a UK startup, has created an avatar-based platform that converts text into BSL, asserting it conveys contextual significance and emotional subtleties.

Meanwhile, the British project SignGPT, with £8.45 million in funding, is focused on building models for two-way translation between BSL and English, alongside what the project claims to be the world’s largest dataset of sign languages.

International collaboration is on the rise as well, with initiatives like a £3.5 million UK-Japan project aimed at developing systems trained on authentic deaf-to-deaf conversation data rather than relying on interpreter recordings.

This rapid progress is particularly striking. Professor Bencie Woll, a collaborator on the SignGPT initiative at University College London’s Deafness, Cognition and Language Research Centre, noted how limited communication options for deaf individuals were when she began her career in BSL research.

“While the world advanced technologically, the deaf community often lagged behind,” she remarked. “Now, we’re witnessing a remarkable acceleration. The opportunities available to the deaf community over the past couple of years have been transformative.”

However, Woll expressed that technology has not always been a straightforward boon. “There’s been a persistent misconception, particularly among researchers unfamiliar with sign languages, that a simple conversion from sign language to written English would magically resolve the challenges faced by deaf individuals,” she explained.

This misconception led to several ineffective technologies, including wearable translation suits and cumbersome gloves that translated signs inaccurately.

“All of those inventions ultimately failed,” she said. “They were crafted by individuals lacking a deep understanding of sign languages, who never consulted with the deaf community during the development process. This has led to ongoing frustration within the community over the abundance of inferior solutions.”

Yet the quest for effective solutions continues. Globally, approximately 70 million people are deaf or hard of hearing. In the UK, census data shows around 151,000 BSL users, approximately 25,000 of whom use BSL as their primary language. BSL is a natural language with its own grammar and structure, independent of spoken English.

For this group, English is often a second or third language, following lip-reading or individualized gestures developed within the family.

This linguistic landscape has profound implications: subtitles or written content sometimes fail to serve as sufficient replacements for direct BSL communication. A comprehensive study carried out in 2017, focusing on deaf children between the ages of 10 and 11, highlighted that a significant number of participants – 48% of those solely taught through spoken language and 82% of those whose primary mode of communication was sign language – exhibited reading abilities below the anticipated levels for their age group.

In an unusual move, the Royal National Institute for Deaf People (RNID) has Dr. Lauren Ward leading AI technology initiatives for the deaf community and advising both government bodies and industry on these matters.

“The rapid pace of development has prompted RNID to hire engineers,” she remarked, highlighting the immense potential for assisting the deaf community while acknowledging the risk of unintended consequences. “The opportunity for advancement is enormous, but we also face substantial risks.”

History shows that deaf individuals have consistently embraced technology, as the transformative impact of SMS messaging in the 1990s demonstrated. However, Ward pointed out that recent years have seen intensified interest and scrutiny. “What was once confined to academic settings has swiftly transitioned into startup environments and commercial products,” she stated.

This shift was made possible by advances in machine learning and other technologies that now make large-scale processing of sign languages practical.

A combination of increased funding for research, enhanced datasets, and greater participation from deaf researchers has contributed to this acceleration. Additionally, public acknowledgment of the persistent gap between the legal rights of deaf individuals and the services they receive has intensified focus on delivering reliable sign language provisions—something that has too often been promised but not realized.

This combination of opportunity and risk makes for a precarious moment, Ward noted.

“There’s a significant amount of excitement surrounding the potential for real improvements in the next five years,” she said. “Nonetheless, there’s a real concern that private entities might prioritize profitability over collaboration with the deaf community.”

Dr. Maartje De Meulder, a deaf scholar focused on AI related to sign language, echoed these sentiments, underscoring the urgency of the situation.

“Currently, a large portion of online content remains inaccessible to deaf individuals, encompassing everything from educational resources to government websites,” she mentioned. “Since nobody can feasibly translate the entire internet into sign languages, even partial solutions could yield remarkable changes.”

Neil Fox, a deaf research fellow at the University of Birmingham, affirmed that if the quality of avatar translation reaches an acceptable level, it could significantly enhance access to online environments that are currently restricted for deaf users.

However, they all proceed with caution. Rebecca Mansell, the CEO of the British Deaf Association, stressed the importance of involving deaf individuals throughout the process. “This has become a highly lucrative sector, and the engagement of deaf community members can all too often be superficial,” she noted.

“The rapid advancements pose a serious threat, as solutions might be imposed without genuine consideration for our needs,” she added, raising valid concerns regarding the appropriateness and regulation of these technologies. “While an avatar might suffice for straightforward tasks like placing orders, critical scenarios such as receiving a cancer diagnosis merit human interaction.”

Dr. Louise Hickman, from the Minderoo Centre for Technology and Democracy and lead author of the report “BSL Is Not For Sale”, said that many companies claim to offer solutions without grasping the intricacies of BSL.

“Current avatar systems still cannot replicate the nuanced understanding offered by human interpreters, which heightens risks in medical and legal contexts,” she stated.

Hickman also highlighted another hurdle: “There’s a notable lack of diverse data. British Sign Language is distinct from other regional sign languages, including Irish or American Sign Language, and there are various dialects within different parts of the UK. As such, the data available for training these AI systems is extremely limited.”

“The deaf community is enthusiastic about innovation,” she concluded, “but we need to ensure we can influence its direction to make absolutely certain that it serves our interests.”

Turning back to the cafe, Hakan Elbir, its founder, expressed skepticism regarding the necessity for more advanced tools beyond his straightforward BSL video menu.

“A lot of chatter surrounds innovation, yet for most deaf individuals, it’s still quite abstract,” he stated. “What I truly desired was a means for meaningful interactions between hearing and deaf individuals.”

“After all, coffee is simply a means to an end,” he added with a smile. “I never relied on complicated technology to dismantle barriers; I merely needed people to engage authentically.”

As Hartwell waited at the counter for his latte, he quietly practiced the sign for “flat white.” Simple human interactions like this, supported by technology but not overshadowed by it, were what kept bringing him back to the cafe, one signed coffee order at a time.
